This talk situates the art and science of performance analysis in the cloud within a framework of reflective practice, as outlined in Donald A. Schön's book "The Reflective Practitioner: How Professionals Think in Action" (1983).

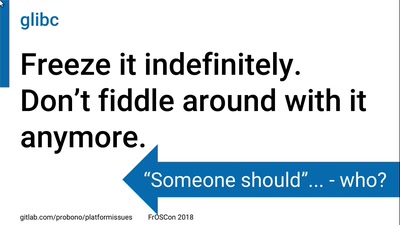

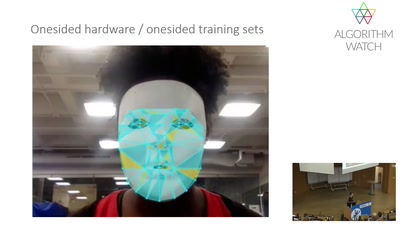

Placing performance analysis in such a framework can help operators transcend an overwhelming spectrum of challenges and focus on work that matters. For example, it's important to see through the common expectations that modern enterprises might have of cloud providers: a plug-and-play mindset, where plugging in is easy but where play can sometimes cost millions of dollars in operating costs, raising questions about the cloud's effectiveness as a platform. With such a mindset, performance engineering might be considered unimportant precisely where it might be most useful. But that's only the tip of the iceberg. Once an operator has gathered the grit and resources to continue with performance analysis, the assumptions of fellow engineers, developers and leaders must also be addressed, and a process of edification and implementation should be carried out. This can be draining for a single operator, as it involves answering misinformed skepticism from different directions. Last but not least, meaningful performance analysis in the cloud might even be a pipe dream for most, as it's not easy to get the right information (if any at all) from cloud vendor engineers and architects for deeper questions and investigations around available monitoring data, without which useful performance modeling is impossible.

Nonetheless, the insights gained from such practice can be helpful overall in defining performance and capacity planning strategies. In this talk specifically, anecdotes about various software tools and enterprise application platforms will be discussed to illustrate the points above, which operators can use to make improvements in their own environments. For example:

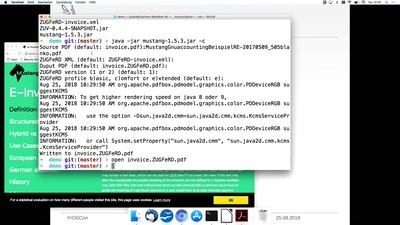

* How to model disk usage for a popular big data software package: with a queueing model, or with linear regression? If using a queueing model, does your cloud vendor provide all the metrics? Are those metrics really what they appear to be? Does your operating system agree?

* Metrics, metrics everywhere, but oops! there's a performance issue with the central metrics system. Metrics, metrics nowhere?

* Spot is cheap, but show me the scaling metric.

* Concurrency in serverless apps.

* etc.
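
To make the first question in the list a little more concrete, here is a minimal Python sketch contrasting the two modeling choices for a disk. It is an illustration under stated assumptions, not the method used in the talk: the disk is treated as a single M/M/1 queue, the 2 ms service time and the IOPS samples are hypothetical, and the "observed" latencies are generated from the queueing formula rather than taken from any real system.

```python
# Sketch: queueing model vs. straight-line fit for disk latency.
# All numbers below are hypothetical, chosen only for illustration.

SERVICE_TIME_S = 0.002  # assumed mean service time per I/O (2 ms)


def utilization(iops, service_time_s):
    """Utilization Law: U = X * S (throughput times service time)."""
    return iops * service_time_s


def mm1_response_time(service_time_s, util):
    """M/M/1 residence time: R = S / (1 - U)."""
    if not 0.0 <= util < 1.0:
        raise ValueError("utilization must be in [0, 1)")
    return service_time_s / (1.0 - util)


def least_squares(xs, ys):
    """Ordinary least-squares fit y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Hypothetical load levels; latencies are synthesized from the M/M/1 formula.
sample_iops = [100, 200, 300, 400]
sample_latency_s = [
    mm1_response_time(SERVICE_TIME_S, utilization(x, SERVICE_TIME_S))
    for x in sample_iops
]

slope, intercept = least_squares(sample_iops, sample_latency_s)

# Extrapolate both models to a load beyond the sampled range (90% utilization).
target_iops = 450
queue_pred = mm1_response_time(
    SERVICE_TIME_S, utilization(target_iops, SERVICE_TIME_S)
)
line_pred = slope * target_iops + intercept

print(f"queueing model: {queue_pred * 1000:.2f} ms")
print(f"linear fit:     {line_pred * 1000:.2f} ms")
```

Under these assumptions the straight-line fit underestimates latency as utilization approaches saturation, because queueing delay grows nonlinearly. The sketch also shows why the follow-up questions matter: the queueing model needs a trustworthy throughput and service time, which depend on what the vendor and the operating system actually report.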