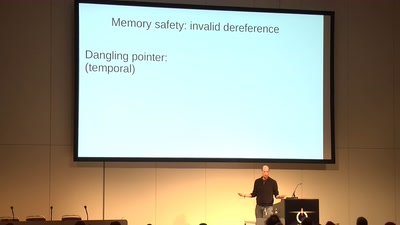

Computers have become ubiquitous and essential, but they remain massively error-prone and insecure - as if we were back in the early days of the industrial revolution, with steam engines exploding left, right, and centre. Why is this, and can we do better? Is it science, engineering, craft, or bodgery?

I'll talk about attempts to mix better engineering methods from a cocktail of empiricism and logic, with examples from network protocols, programming languages, and (especially) the concurrency behaviour of programming languages and multiprocessors (from the ARMs in your phone to x86 and IBM Power servers), together with dealings with architects and language standards groups.